Open-Ended Response Quality: How We Spot AI-Generated Answers

Open-ended questions are one of the most useful parts of a survey. They let people explain things in their own words and share details you didn't think to ask about.

They’re also more likely to be affected by AI-generated answers.

As tools like ChatGPT have become more common, we see more open-ended responses that look unusually polished. They’re well written, neatly structured, and cover a lot of ground. For research, these answers aren’t helpful.

(For context on the broader data quality challenge, see our post on when bots take surveys.)

Why AI Responses Are a Problem

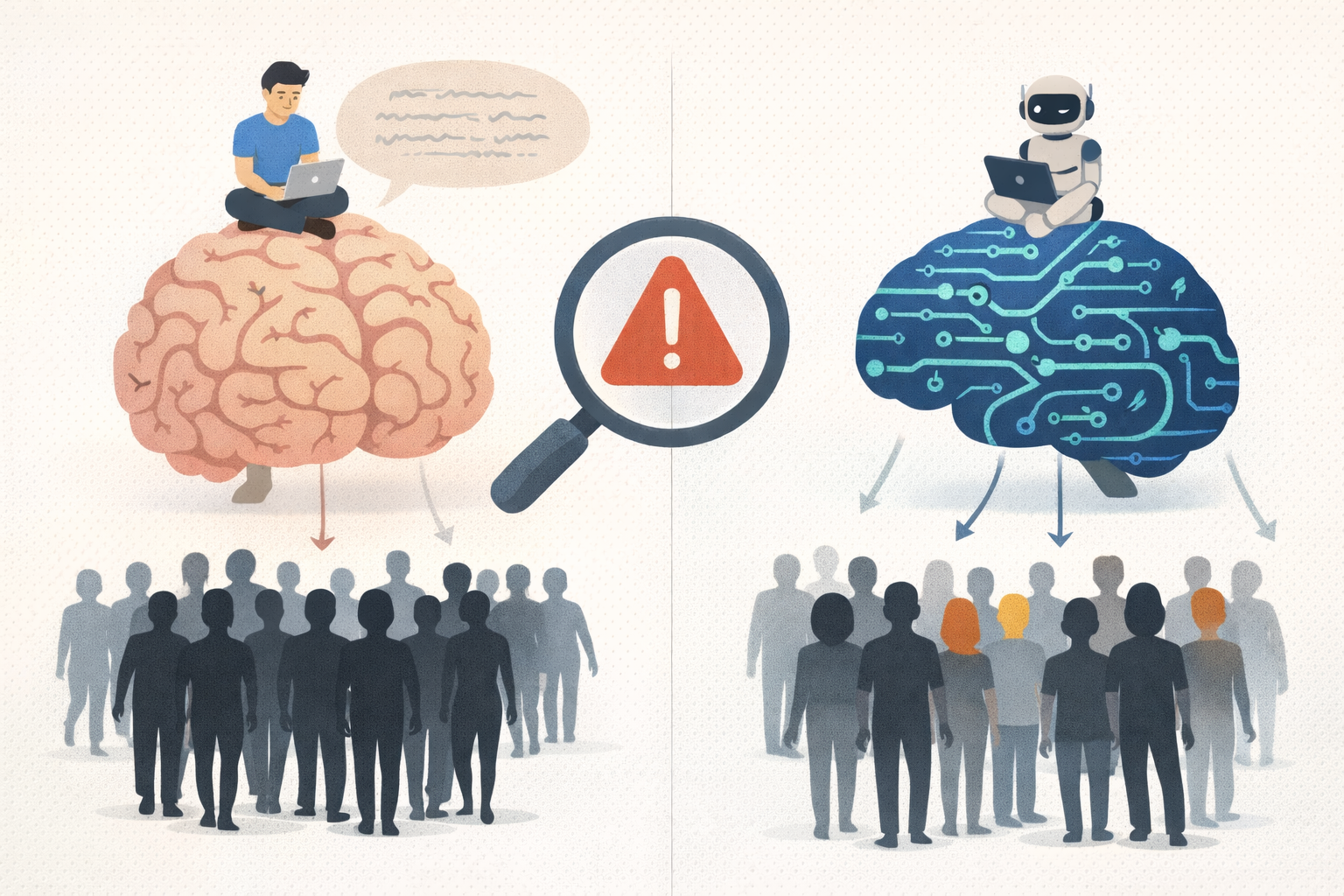

When someone pastes your survey question into ChatGPT and copies the answer back, you're not learning what that person thinks. You're learning what an AI thinks a good answer looks like.

The insights from AI-generated responses aren’t real consumer perspectives. They’re based on AI training data and the patterns the model has learned to produce. They’re bad data.

Patterns We Look For

After reviewing thousands of open-ended responses, we've developed an eye for AI-generated content. Here's what raises red flags:

Unnatural structure: Some answers follow a very organized structure, with a clear introduction and conclusion. Most people do not respond to surveys this way.

Generic language: Some use general phrases that sound formal or academic. These phrases appear often in AI-generated text and rarely in natural responses.

Excessive thoroughness: AI-generated answers also tend to include more points than necessary. In surveys, most people give short, direct answers.

Lack of personal voice: These responses often lack personal detail. Real answers usually include specific experiences, opinions, or wording that sounds informal or uneven.

Mismatch with other responses: If someone gives low-effort, single-word answers to other questions but suddenly produces a polished paragraph, that inconsistency is telling.
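The last pattern, a mismatch within one respondent's answers, is the easiest to sketch in code. The example below is a minimal illustration under stated assumptions, not a description of any production check: the function name `flag_effort_mismatch` and the thresholds `short_words`, `long_words`, and `min_short_share` are hypothetical, and word count is only a rough stand-in for "polished".

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words in an answer."""
    return len(text.split())


def flag_effort_mismatch(answers, short_words=3, long_words=60, min_short_share=0.5):
    """Return the indices of long answers from a respondent whose
    other open-ended answers are mostly very short.

    All thresholds are illustrative assumptions, not tuned values.
    """
    counts = [word_count(a) for a in answers]
    flagged = []
    for i, n in enumerate(counts):
        others = counts[:i] + counts[i + 1:]
        if not others:
            continue  # a single answer gives nothing to compare against
        short_share = sum(1 for c in others if c <= short_words) / len(others)
        if n >= long_words and short_share >= min_short_share:
            flagged.append(i)
    return flagged


# One respondent's open-ended answers, in question order (made-up data).
respondent = ["ok", "fine", "word " * 80, "no"]
flagged_indices = flag_effort_mismatch(respondent)
```

A flag here only marks the answer for a closer look. A respondent can legitimately care more about one question than the others.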

Our Review Process

We don't rely on AI detection tools alone because they’re not reliable enough.

Instead, we use a multi-layered approach (detailed in our data quality checklist for researchers):

We compare open-ended answers to related closed-ended questions to check for consistency.

We check how long each response took to write, since a long answer submitted faster than anyone could type it was probably pasted in.

We also look for repeated or very similar wording across responses.

Final decisions are made by experienced researchers reviewing the responses.
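The timing and similar-wording checks above can be sketched as follows. This is a rough illustration, not the exact method described here: the function names, the `max_chars_per_second` limit, and the similarity `threshold` are all assumptions made for the example. Similarity is measured with `difflib.SequenceMatcher` from the Python standard library, which is one of several reasonable choices.

```python
from difflib import SequenceMatcher
from itertools import combinations


def too_fast(text: str, seconds: float, max_chars_per_second: float = 7.0) -> bool:
    """True if the answer arrived faster than a plausible typing speed.

    The default of 7 characters per second is an assumed upper bound.
    """
    if seconds <= 0:
        return True  # missing or zero timing is itself worth a review
    return len(text) / seconds > max_chars_per_second


def near_duplicates(responses: dict, threshold: float = 0.9) -> list:
    """Return (id_a, id_b, ratio) for pairs of responses with very similar wording.

    `responses` maps a respondent id to that respondent's answer text.
    """
    # Lowercase and collapse whitespace so trivial edits do not hide a match.
    normalized = {rid: " ".join(text.lower().split()) for rid, text in responses.items()}
    pairs = []
    for (id_a, text_a), (id_b, text_b) in combinations(normalized.items(), 2):
        ratio = SequenceMatcher(None, text_a, text_b).ratio()
        if ratio >= threshold:
            pairs.append((id_a, id_b, ratio))
    return pairs


# Made-up answers to the same question from three respondents.
sample = {
    "r1": "The product is great and easy to use.",
    "r2": "the product is great and  easy to use.",
    "r3": "Too expensive for me",
}
pasted = too_fast("x" * 600, seconds=20)   # 30 characters per second
typed = too_fast("x" * 100, seconds=60)    # under 2 characters per second
similar_pairs = near_duplicates(sample)
```

Comparing every pair grows quadratically with the number of responses, so a large study would need a cheaper first pass. Either way, the output is a list of candidates for a researcher to review, not a verdict.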

Prevention Is Key

Reviewing data after the fact is important, but prevention also matters.

Ask specific, personal questions: "Describe the last time you purchased [category]" is harder to fake than "What do you think about [category]?"

Request details that require experience: "What was frustrating about that experience?" prompts authentic answers better than generic opinion questions.

Vary question format: Mix short-answer questions with longer ones. People who rely on AI often use it for every open-ended question, so polished, paragraph-length answers to even the simplest prompts stand out.

The Stakes Are Real

Bad open-ended data can actively mislead. If 20% of your responses are AI-generated, your analysis will surface "insights" that reflect AI patterns rather than customer perspectives, and any decision built on them rests on a flawed base.

Maintaining data quality for open-ended questions requires effort, but it is necessary for research that can inform real decisions.

(For more on how bad data impacts decisions, see The Hidden Cost of Bad Data.)